Why Your AI Agent Needs MCP, Not Just APIs

2026-03-06 · BotsUP Lab · 6 min read

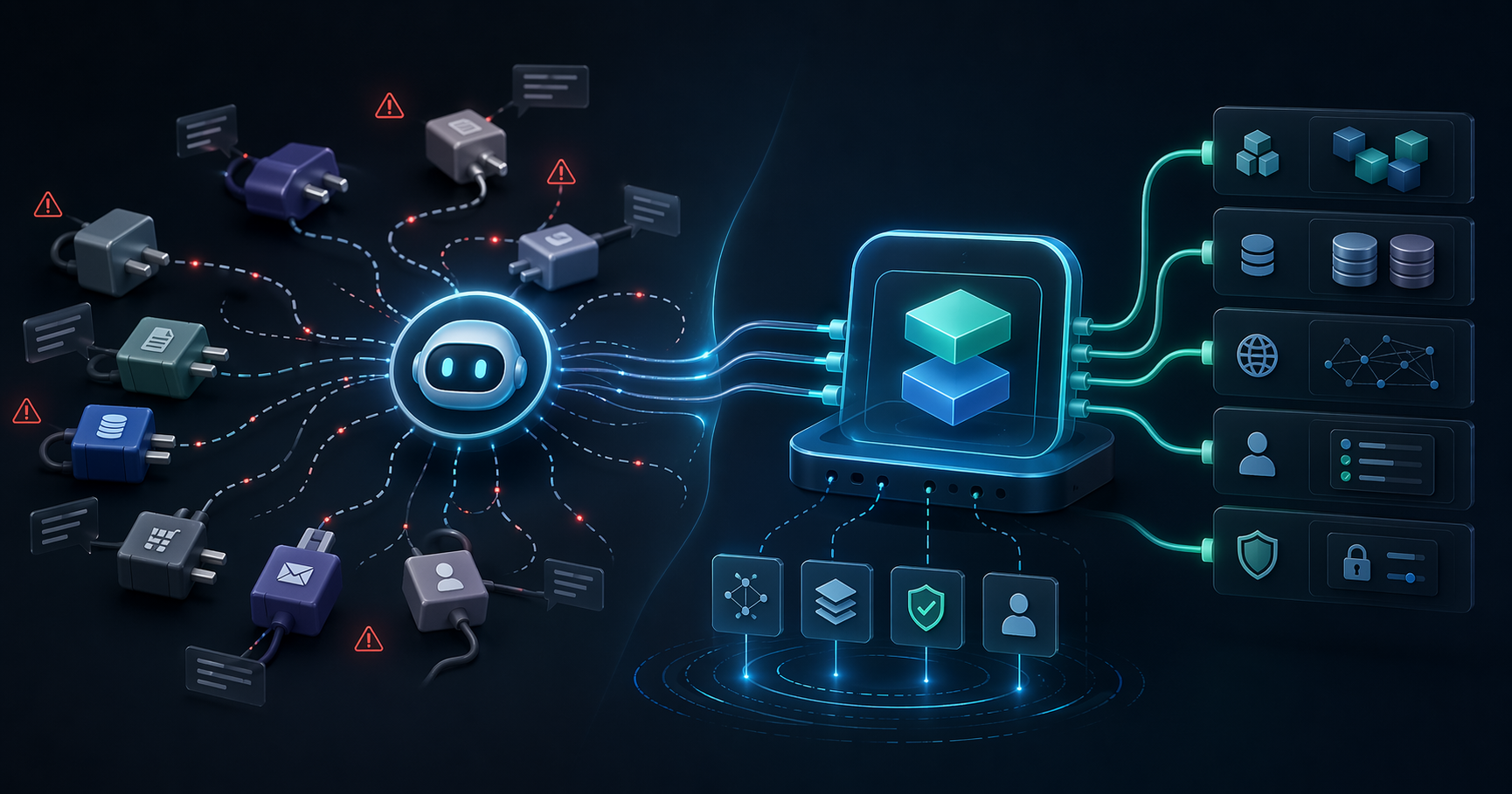

Many teams assume that if APIs already exist, AI integration is mostly done. In practice, production-grade agent workflows need a better capability layer than raw API access alone.

Summary

One of the most common questions in enterprise AI planning is:

We already have APIs. Why do we need MCP?

At first glance, the question sounds reasonable. APIs already expose functionality, so why introduce another layer such as the Model Context Protocol (MCP)?

For simple prototypes, direct API integration can absolutely work.

The problem appears when teams try to scale beyond a single demo:

- Models struggle to choose the right tool reliably

- Tool descriptions are inconsistent

- Permissions are scattered

- Every new platform adds another custom adapter

- Documentation works for engineers, but not always for agents

That is why many AI projects fail not because the model is weak, but because the tool layer was never designed for agent-first use.

APIs are important, but they were not designed for agent-first workflows

Traditional APIs are typically designed for:

- Engineers reading documentation

- Application developers wiring flows explicitly

- Predictable client behavior

- A caller that already knows which endpoint to use

AI agents work differently.

They need to:

- Discover what tools are available

- Infer which tool fits the task

- Understand structured inputs and outputs

- Handle failures and retries intelligently

So the real issue is not just whether the capability exists. It is whether the model can understand and use that capability reliably.

MCP standardizes how agents discover and use capabilities

MCP should not be seen as a replacement for your APIs.

It is better understood as an agent integration layer on top of existing systems.

It helps turn APIs, internal tools, and data sources into something an AI system can discover, reason about, and use more predictably.

There are three major shifts.

1. From endpoint-centric to capability-centric design

Typical APIs expose endpoints such as:

- POST /create-report

- GET /customers/{id}

- PATCH /orders/{id}

These are useful implementation details for developers.

For agents, the more important questions are:

- What task does this tool help with?

- When should it be used?

- What input does it expect?

- What outcome does it produce?

MCP makes it easier to present capabilities in a way that maps to task execution rather than raw endpoint structure.
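As an illustration, the sketch below presents the `GET /customers/{id}` endpoint as a capability. It follows the shape MCP uses for tool definitions (a `name`, a natural-language `description`, and an `inputSchema` written as JSON Schema); the tool name `get_customer_profile`, its wording, and the ID format are hypothetical.

```python
import json

# The raw endpoint a developer would call directly.
ENDPOINT = ("GET", "/customers/{id}")

# A hypothetical MCP-style tool definition for the same capability:
# it says what task the tool serves, when (not) to use it, and what input it expects.
GET_CUSTOMER_PROFILE = {
    "name": "get_customer_profile",
    "description": (
        "Look up one customer's profile (name, plan, account status) by customer ID. "
        "Use this when the user refers to a specific customer. "
        "Do not use it to search or list customers."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {
                "type": "string",
                "description": "Internal customer ID, for example 'C-10042'.",
            }
        },
        "required": ["customer_id"],
        "additionalProperties": False,
    },
}


def to_http_request(tool_args: dict) -> tuple[str, str]:
    """Map validated tool arguments back onto the existing endpoint."""
    method, path = ENDPOINT
    return method, path.replace("{id}", tool_args["customer_id"])


if __name__ == "__main__":
    print(json.dumps(GET_CUSTOMER_PROFILE, indent=2))
    print(to_http_request({"customer_id": "C-10042"}))
```

The endpoint itself stays unchanged; the tool definition adds the task framing, usage guidance, and input contract that a model needs in order to pick and call it correctly.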

2. From human-oriented docs to model-friendly structure

Many API docs are thorough for human readers but inefficient for models.

Agents perform better when they have:

- Clear tool descriptions

- Stable schemas

- Predictable error behavior

- Consistent semantics

Without that, the model may choose the wrong tool, misuse parameters, or retry in the wrong situations.
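A minimal sketch of what stable schemas and predictable errors can look like in practice, assuming a hypothetical customer-lookup tool. Invalid arguments come back as a structured tool result with `isError` set, which is how MCP lets a tool report execution problems to the model; the validator here checks only a small subset of JSON Schema and is not a substitute for a real validation library.

```python
from typing import Any

# Hypothetical input schema for a customer-lookup tool.
INPUT_SCHEMA = {
    "type": "object",
    "properties": {"customer_id": {"type": "string"}},
    "required": ["customer_id"],
}

# The few JSON Schema types this sketch knows how to check.
_PY_TYPES = {"string": str, "object": dict, "array": list}


def validate(args: dict[str, Any], schema: dict[str, Any]) -> list[str]:
    """Return readable problems; an empty list means the arguments passed.

    Only 'required', unknown fields, and simple 'type' checks are covered.
    """
    problems = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in args:
            problems.append(f"missing required field '{name}'")
    for name, value in args.items():
        spec = properties.get(name)
        if spec is None:
            problems.append(f"unknown field '{name}'")
        elif not isinstance(value, _PY_TYPES.get(spec.get("type"), object)):
            problems.append(f"field '{name}' must be of type {spec['type']}")
    return problems


def text_result(text: str, is_error: bool = False) -> dict[str, Any]:
    """Build a tool result in MCP's shape: a list of content blocks plus isError."""
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def call_get_customer(args: dict[str, Any]) -> dict[str, Any]:
    problems = validate(args, INPUT_SCHEMA)
    if problems:
        # Say exactly what to fix so the model can correct the call
        # instead of retrying blindly with the same arguments.
        return text_result("Invalid arguments: " + "; ".join(problems), is_error=True)
    # Placeholder: a real server would call GET /customers/{id} here.
    return text_result(f"Looked up customer {args['customer_id']}")
```

Because every failure has the same shape and names the offending field, the model can tell "fix the arguments" apart from "the system is down" and decide whether a retry makes sense.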

3. From one-off integrations to a governable capability layer

Direct integration looks fast when there is only one model and one workflow.

Enterprise reality changes quickly:

- Claude needs access

- ChatGPT needs access

- Cursor needs access

- Internal agents need access

- Different teams want different tools

Without a shared capability layer, the organization ends up maintaining many scattered adapters. MCP helps centralize that integration logic and governance.
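To show what centralizing means, here is a minimal sketch of a single registry that answers the two MCP tool methods, `tools/list` and `tools/call`, over JSON-RPC 2.0. Any client that speaks MCP can use the same registry, so adding a new platform does not mean writing a new adapter. Transport, the initialization handshake, and authentication are deliberately left out, and the `echo` tool is a placeholder.

```python
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], dict[str, Any]]


def _error(req_id: Any, code: int, message: str) -> dict[str, Any]:
    """Build a JSON-RPC 2.0 error response."""
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


class ToolRegistry:
    """One shared registry that every MCP client talks to, instead of one
    custom adapter per agent platform."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[dict[str, Any], Handler]] = {}

    def register(self, definition: dict[str, Any], handler: Handler) -> None:
        self._tools[definition["name"]] = (definition, handler)

    def handle(self, request: dict[str, Any]) -> dict[str, Any]:
        """Dispatch a JSON-RPC 2.0 request for the MCP tool methods."""
        req_id = request.get("id")
        method = request.get("method")
        params = request.get("params", {})
        if method == "tools/list":
            tools = [definition for definition, _ in self._tools.values()]
            return {"jsonrpc": "2.0", "id": req_id, "result": {"tools": tools}}
        if method == "tools/call":
            entry = self._tools.get(params.get("name"))
            if entry is None:
                # -32602 is JSON-RPC's "Invalid params" code.
                return _error(req_id, -32602, f"Unknown tool: {params.get('name')}")
            _, handler = entry
            result = handler(params.get("arguments", {}))
            return {"jsonrpc": "2.0", "id": req_id, "result": result}
        # -32601 is JSON-RPC's "Method not found" code.
        return _error(req_id, -32601, f"Method not found: {method}")


# Usage: register a placeholder tool once; every client sees the same definition.
registry = ToolRegistry()
registry.register(
    {
        "name": "echo",
        "description": "Return the given text unchanged. Useful for connectivity checks.",
        "inputSchema": {
            "type": "object",
            "properties": {"text": {"type": "string", "description": "Text to echo."}},
            "required": ["text"],
        },
    },
    lambda args: {"content": [{"type": "text", "text": args["text"]}], "isError": False},
)
```

The design choice is that tool definitions and handlers live in one place; governance hooks such as authorization or logging can then be added once in `handle` rather than in every client integration.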

What goes wrong when teams stop at APIs

Problem 1: the same capability gets wrapped repeatedly

A single internal API can end up being re-integrated across:

- Web applications

- Internal copilots

- Support agents

- Workflow automation

- Analytics assistants

Each new wrapper adds maintenance cost and increases the chance of inconsistent authorization.

Problem 2: models are left guessing

If your interface is only described in engineering terms, the model still has to infer:

- Which tool fits the current task

- When not to use it

- What preconditions matter

That uncertainty directly lowers the rate of successful tool calls.

Problem 3: governance becomes fragmented

As integrations spread, so do the places where permissions, scopes, logging, and access control are implemented.

The result is usually:

- Over-broad scopes

- Inconsistent token handling

- Weak audit visibility

- Harder rollout reviews

The stack may still “work,” but it becomes much harder to trust.

Where MCP is especially valuable

Not every environment needs MCP immediately, but it becomes especially useful when:

1. Existing systems need to support multiple AI platforms

If you already expect to support Claude, ChatGPT, Cursor, and internal agents, investing early in a reusable capability layer usually reduces future rework.

2. Governance matters as much as connectivity

As soon as you care about:

- who can call what

- which tenant sees which tools

- how incidents are investigated

- how tools evolve over time

you are already beyond simple raw-API integration.
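Questions like "who can call what" and "which tenant sees which tools" can be answered in one place if policy is attached to each tool on the server side. The sketch below assumes a hypothetical `required_scope` and tenant allowlist per tool and filters discovery accordingly, so a caller never sees tools it is not allowed to call; the tool names, scopes, and tenant IDs are illustrative.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class Caller:
    """Identity the MCP layer resolved upstream, e.g. from a validated access token."""
    tenant: str
    scopes: frozenset[str]


@dataclass
class GovernedTool:
    definition: dict[str, Any]  # what the model sees
    required_scope: str         # hypothetical policy field, kept server-side
    tenants: frozenset[str] = field(default_factory=frozenset)  # empty means all tenants

    def allowed_for(self, caller: Caller) -> bool:
        tenant_ok = not self.tenants or caller.tenant in self.tenants
        return tenant_ok and self.required_scope in caller.scopes


def visible_tools(tools: list[GovernedTool], caller: Caller) -> list[dict[str, Any]]:
    """Policy-filtered discovery: callers only see tools they may call."""
    return [tool.definition for tool in tools if tool.allowed_for(caller)]


# Definitions trimmed to the name for brevity.
TOOLS = [
    GovernedTool({"name": "get_customer_profile"}, "customers:read"),
    GovernedTool({"name": "issue_refund"}, "billing:write", frozenset({"tenant-a"})),
]

agent_b = Caller("tenant-b", frozenset({"customers:read", "billing:write"}))
print([d["name"] for d in visible_tools(TOOLS, agent_b)])  # → ['get_customer_profile']
```

Filtering the list is not enough by itself: the same `allowed_for` check should also run when a tool is called, since a client could request a tool by name without listing first.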

3. AI is expected to participate in workflows, not just chat

The moment an agent needs to:

- retrieve data

- draft documents

- trigger downstream actions

- write back to systems

- coordinate across tools

you are working on agentic workflow design, not just a chat layer.

A practical way to think about it

In short:

- APIs expose capabilities between systems

- MCP helps AI agents discover and use those capabilities safely and predictably

That is why this is not really an API-versus-MCP decision.

For most enterprises, the better model is:

Keep the APIs. Add MCP as the agent-ready integration layer above them.

A better rollout sequence

If you are planning enterprise AI integration, a useful sequence is:

Step 1: Decide which capabilities should become tools

Do not expose every API directly.

Start with high-value capabilities such as:

- repeatable lookups

- controlled document generation

- workflow steps with clear ownership

- internal functions with well-defined boundaries

Step 2: Design tools around use cases, not only endpoints

Think through:

- Is the tool name clear?

- Is the intended use case obvious?

- Is the schema agent-friendly?

- Are failure modes understandable?
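Parts of this checklist can be automated. The sketch below reviews an MCP-style tool definition for a few agent-unfriendly patterns; the naming pattern and the minimum description length are arbitrary house rules chosen for illustration, and questions such as whether failure modes are understandable still need human review.

```python
import re
from typing import Any

NAME_PATTERN = re.compile(r"^[a-z][a-z0-9_]{2,63}$")  # arbitrary house convention
MIN_DESCRIPTION_WORDS = 8                              # arbitrary threshold


def review_tool(definition: dict[str, Any]) -> list[str]:
    """Flag common agent-unfriendly patterns in an MCP-style tool definition."""
    issues = []
    name = definition.get("name", "")
    if not NAME_PATTERN.match(name):
        issues.append(f"name {name!r} is not a short snake_case identifier")
    description = definition.get("description", "")
    if len(description.split()) < MIN_DESCRIPTION_WORDS:
        issues.append("description is too short to explain when the tool should be used")
    schema = definition.get("inputSchema", {})
    properties = schema.get("properties", {})
    if schema.get("type") != "object":
        issues.append("inputSchema should be a JSON Schema object")
    for prop, spec in properties.items():
        if "description" not in spec:
            issues.append(f"parameter {prop!r} has no description")
    for req in schema.get("required", []):
        if req not in properties:
            issues.append(f"required parameter {req!r} is not defined in properties")
    return issues


if __name__ == "__main__":
    # Flags the camelCase name, the terse description, and the undocumented 'q'.
    print(review_tool({
        "name": "createReport",
        "description": "Creates report.",
        "inputSchema": {"type": "object", "properties": {"q": {"type": "string"}}},
    }))
```

A check like this fits naturally in CI, so tool definitions are reviewed with the same discipline as the APIs they wrap.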

Step 3: Add governance through a shared MCP layer

That layer should help handle:

- authentication

- authorization

- tool discovery

- logging

- rate limiting

- tenant isolation
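As one example of handling rate limiting and logging in the shared layer rather than in each adapter, the sketch below wraps tool calls with a per-tenant sliding-window rate limiter and an audit entry for every attempt, allowed or not. It assumes authentication has already resolved the tenant upstream, keeps state in memory, and uses an injectable clock so the behavior is easy to test; a production layer would persist the audit trail and share limits across instances. The class name `GovernedCaller` is hypothetical.

```python
import json
import time
from collections import defaultdict, deque
from typing import Any, Callable


class GovernedCaller:
    """Wraps tool handlers with per-tenant rate limiting and an audit trail."""

    def __init__(self, max_calls: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock
        self._calls: dict[str, deque[float]] = defaultdict(deque)
        self.audit_log: list[str] = []  # JSON lines; durable storage in a real system

    def call(self, tenant: str, tool: str,
             handler: Callable[[dict[str, Any]], Any], args: dict[str, Any]) -> Any:
        now = self.clock()
        recent = self._calls[tenant]
        # Drop timestamps that have left the sliding window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        allowed = len(recent) < self.max_calls
        # Record every attempt, including rejected ones, for incident review.
        self.audit_log.append(json.dumps(
            {"ts": now, "tenant": tenant, "tool": tool, "allowed": allowed}))
        if not allowed:
            raise PermissionError(f"rate limit exceeded for tenant {tenant!r}")
        recent.append(now)
        return handler(args)


if __name__ == "__main__":
    fake_now = [0.0]
    gate = GovernedCaller(max_calls=2, window_seconds=60, clock=lambda: fake_now[0])
    echo = lambda args: args["text"]
    print(gate.call("tenant-a", "echo", echo, {"text": "first"}))
    print(gate.call("tenant-a", "echo", echo, {"text": "second"}))
    try:
        gate.call("tenant-a", "echo", echo, {"text": "third"})
    except PermissionError as exc:
        print("blocked:", exc)
    fake_now[0] = 61.0  # the window has passed
    print(gate.call("tenant-a", "echo", echo, {"text": "fourth"}))
```

Keeping limits per tenant means one noisy team or agent cannot exhaust capacity for everyone else, and logging rejected attempts gives incident reviews the full picture.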

Step 4: Connect the agent platforms above it

This keeps the capability layer reusable even as models and channels change.

Conclusion

Enterprise AI projects often start by assuming the model is the hard part.

In production, the harder question is usually how to make the model use business capabilities safely, reliably, and under control.

That is where MCP becomes valuable.

It does not exist to add jargon. It exists to reduce chaos as AI adoption expands across teams, workflows, and platforms.

If your goal is enterprise AI implementation rather than a one-time demo, MCP is usually worth considering earlier than teams expect.

Method and framing

This article looks at the problem from an enterprise rollout perspective rather than a protocol-first perspective. The focus is on what happens when teams try to scale AI integrations across tools, platforms, teams, and governance requirements.

FAQ

If we already have APIs, when does MCP become useful?

MCP becomes valuable when the same business capabilities need to be reused across multiple AI platforms and workflows with better consistency, governance, and discoverability.

Does MCP replace existing APIs?

Usually no. The more practical model is to keep existing APIs and add MCP as an agent-ready capability layer above them.

Do simple chat use cases need MCP?

Not always. But once the AI needs to read internal data, call tools, generate documents, or trigger workflows, MCP becomes much more relevant.

Want to evaluate whether your current APIs are MCP-ready?

BotsUP helps enterprises review internal APIs, tools, and data sources, then design MCP integration layers, authorization models, and production-ready agent workflows.

