AI Agent Adoption for Taiwan Enterprises: From POC to Launch

2026-03-06 · BotsUP Lab · 7 min read

A practical rollout guide for Taiwan enterprises moving AI Agents from early proof-of-concept into real production workflows, governance, and adoption.

Summary

Many companies in Taiwan have already moved past the question of whether they should explore AI.

The harder question now is how to get from experiments and demos to systems that are trusted enough to be used in real workflows.

Most projects do not get stuck because the model is bad. They get stuck because:

- the first use case is poorly chosen

- KPIs are vague

- the AI never connects to actual business workflows

- governance and permissions are handled too late

- rollout and operations planning are missing

If the goal is not just an impressive prototype but a system that saves time, reduces manual work, and earns internal trust, then AI Agent implementation should be treated as an enterprise transformation effort, not just a technical trial.

Three common mistakes in early adoption

Mistake 1: starting with the most ambitious idea instead of the most stable one

Teams often want to begin with:

- autonomous multi-agent collaboration

- cross-functional decision automation

- fully self-directed enterprise assistants

Those goals are not wrong, but they are usually high-risk as a first rollout.

In practice, higher-success starting points tend to be:

- internal knowledge search

- support assistance

- document drafting

- report summarization

- structured process automation

These use cases are easier to measure, easier to control, and easier for business stakeholders to trust.

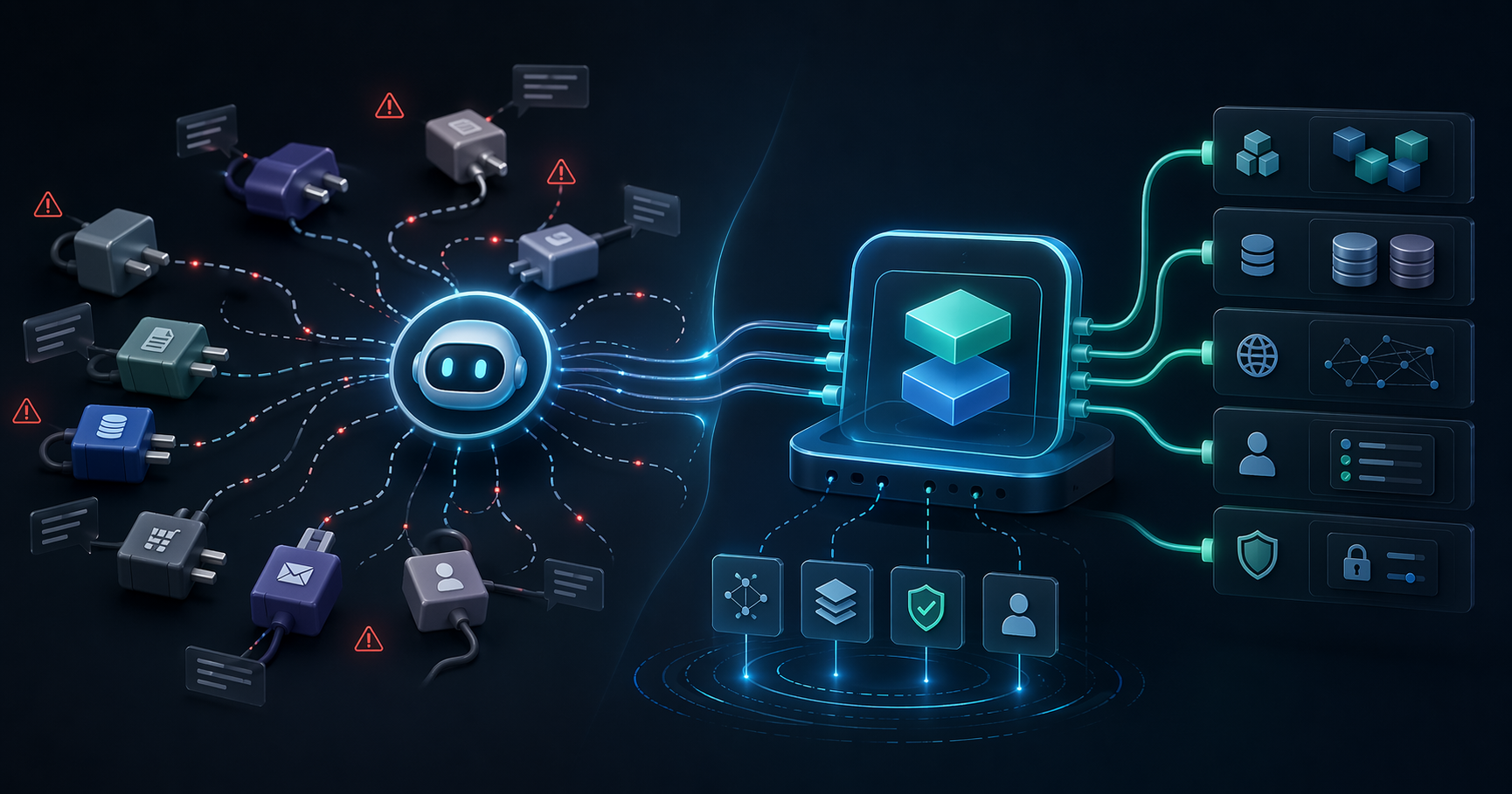

Mistake 2: building only a chat interface

If the AI can only answer questions but cannot:

- retrieve internal data

- generate useful outputs

- trigger a next-step workflow

- write back into the system

then the business impact often stays vague.

Real enterprise value usually appears when AI becomes part of the workflow, not just a conversational surface.

Mistake 3: assuming a successful POC means production is close

A proof of concept validates direction. It does not prove production readiness.

POCs often skip:

- permissions and roles

- auditability

- exception handling

- monitoring

- user training

- cross-team operating model

That is why many teams find the demo easy, only to discover that the production rollout is suddenly difficult.

A more practical rollout path for Taiwan enterprises

Instead of asking “Should we do AI?” it is more useful to think in stages.

Stage 1: choose the right first use case

The first use case should be judged on three factors:

- How frequently does the task occur?

- How standardized is the workflow?

- How easy is the outcome to measure?
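If several candidates look plausible, the three factors can be turned into a rough, comparable score. The sketch below is only an illustration: the 1 to 5 rating scale, the equal default weights, and the `UseCase`, `score_use_case`, and `rank_use_cases` names are assumptions, not a formal method.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    """A candidate first use case, rated 1 (low) to 5 (high) on each factor.

    The 1-5 scale is a hypothetical convention chosen for this sketch.
    """
    name: str
    frequency: int        # How frequently does the task occur?
    standardization: int  # How standardized is the workflow?
    measurability: int    # How easy is the outcome to measure?


def score_use_case(uc: UseCase, weights: tuple[float, float, float] = (1.0, 1.0, 1.0)) -> float:
    """Return the weighted average of the three ratings (still on the 1-5 scale)."""
    ratings = (uc.frequency, uc.standardization, uc.measurability)
    for r in ratings:
        if not 1 <= r <= 5:
            raise ValueError(f"rating {r} for {uc.name!r} is outside 1-5")
    return sum(w * r for w, r in zip(weights, ratings)) / sum(weights)


def rank_use_cases(candidates: list[UseCase]) -> list[tuple[str, float]]:
    """Sort candidates from highest to lowest score."""
    scored = [(uc.name, score_use_case(uc)) for uc in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical ratings for illustration only; replace with your own assessment.
    candidates = [
        UseCase("cross-functional decision automation", frequency=2, standardization=1, measurability=2),
        UseCase("weekly summary generation", frequency=5, standardization=4, measurability=4),
    ]
    for name, score in rank_use_cases(candidates):
        print(f"{score:.2f}  {name}")
```

A score like this does not replace judgment; its main value is forcing the team to write down why one workflow was preferred over another.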

Good first-wave examples include:

- proposal drafting support

- support response assistance

- SOP and document search

- weekly summary generation

- structured intake and approval preparation

These are easier to validate and easier to champion internally.

Stage 2: make the POC prove more than model quality

A solid POC should test at least five things:

1. Is the workflow worth automating?

Some tasks are annoying but low impact. Others are repetitive and expensive enough that even modest improvement matters.

2. Is the data usable?

Agent quality depends heavily on data quality.

If information is fragmented, inconsistent, or poorly governed, the model will not rescue the workflow on its own.

3. Can the agent use tools reliably?

If production will involve internal systems or APIs, the POC should validate at least one minimal tool path, not only pure chat behavior.
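In its smallest form, a tool path is one read-only function that the agent calls with structured arguments, plus validation around that call. The sketch below assumes the model emits a JSON tool call shaped like `{"tool": ..., "arguments": {...}}`; the `lookup_order_status` tool, its in-memory data, and the `TOOLS` registry are hypothetical stand-ins for a real internal API.

```python
import json
from typing import Any, Callable

# Hypothetical stand-in for an internal system; a real POC would call the actual API.
_FAKE_ORDERS = {"A-1001": "shipped", "A-1002": "pending approval"}


def lookup_order_status(order_id: str) -> dict[str, Any]:
    """Read-only tool: return the status of one order."""
    if order_id not in _FAKE_ORDERS:
        return {"ok": False, "error": f"unknown order {order_id}"}
    return {"ok": True, "order_id": order_id, "status": _FAKE_ORDERS[order_id]}


# Registry: tool name -> (function, required argument names).
TOOLS: dict[str, tuple[Callable[..., dict[str, Any]], set[str]]] = {
    "lookup_order_status": (lookup_order_status, {"order_id"}),
}


def run_tool_call(raw: str) -> dict[str, Any]:
    """Parse a model-produced tool call, validate it, and execute it.

    Failures are returned as structured errors rather than raised exceptions.
    """
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"ok": False, "error": "tool call must be a JSON object"}
    name = call.get("tool")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unsupported tool {name!r}"}
    if not isinstance(args, dict):
        return {"ok": False, "error": "arguments must be a JSON object"}
    func, required = TOOLS[name]
    provided = set(args)
    missing, extra = required - provided, provided - required
    if missing or extra:
        return {"ok": False, "error": f"missing={sorted(missing)} extra={sorted(extra)}"}
    return func(**args)


if __name__ == "__main__":
    print(run_tool_call('{"tool": "lookup_order_status", "arguments": {"order_id": "A-1001"}}'))
    print(run_tool_call('{"tool": "delete_order", "arguments": {}}'))
```

Returning structured errors instead of raising makes it easy to count, during the POC, how often the agent produces malformed or unsupported calls.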

4. Is the risk manageable?

Teams should define early which actions are:

- read-only

- draft-only

- approval-gated

- fully automated
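These tiers are most reliable when they are enforced in code rather than left to prompt instructions. A minimal sketch of such a policy gate follows; the action names, the `ActionTier` enum, and the rule that approval-gated actions need a recorded approver are assumptions for illustration.

```python
from enum import Enum
from typing import Optional


class ActionTier(Enum):
    READ_ONLY = "read-only"              # reads data, never changes it
    DRAFT_ONLY = "draft-only"            # produces drafts that a human sends or saves
    APPROVAL_GATED = "approval-gated"    # acts only after a human approves
    FULLY_AUTOMATED = "fully automated"  # acts on its own


# Hypothetical policy table; any action not listed here is denied.
POLICY: dict[str, ActionTier] = {
    "search_sop_documents": ActionTier.READ_ONLY,
    "draft_support_reply": ActionTier.DRAFT_ONLY,
    "submit_purchase_request": ActionTier.APPROVAL_GATED,
    "tag_ticket_category": ActionTier.FULLY_AUTOMATED,
}


def decide(action: str, approved_by: Optional[str] = None) -> str:
    """Return 'execute', 'save_as_draft', 'await_approval', or 'deny'."""
    tier = POLICY.get(action)
    if tier is None:
        return "deny"  # default-deny anything not explicitly classified
    if tier in (ActionTier.READ_ONLY, ActionTier.FULLY_AUTOMATED):
        return "execute"
    if tier is ActionTier.DRAFT_ONLY:
        return "save_as_draft"
    # APPROVAL_GATED: proceed only when an approver has been recorded.
    return "execute" if approved_by else "await_approval"


if __name__ == "__main__":
    print(decide("submit_purchase_request"))                            # await_approval
    print(decide("submit_purchase_request", approved_by="manager.lin"))  # execute
    print(decide("delete_customer_record"))                             # deny
```

Unlisted actions are denied by default, so a newly connected tool cannot quietly become fully automated just because it was registered.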

5. Are success metrics clear?

Useful enterprise metrics include:

- reduction in handling time

- lower ticket or workload volume

- faster document creation

- shorter data lookup time

- user adoption and repeat usage

Without metrics, the POC may feel promising but still fail to earn budget.
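Most of these metrics come down to comparing a baseline period with the pilot period. The sketch below computes two of them, assuming per-case handling times in minutes and per-user session counts are already being collected; the function names and sample numbers are hypothetical, not measured results.

```python
from statistics import median


def handling_time_reduction(baseline_minutes: list[float], pilot_minutes: list[float]) -> float:
    """Relative reduction in median handling time.

    0.25 means the median case takes 25% less time during the pilot.
    Medians are used so a few unusually long cases do not dominate the result.
    """
    if not baseline_minutes or not pilot_minutes:
        raise ValueError("both periods need at least one measurement")
    before = median(baseline_minutes)
    after = median(pilot_minutes)
    if before <= 0:
        raise ValueError("baseline median must be positive")
    return (before - after) / before


def repeat_usage_rate(sessions_per_user: dict[str, int]) -> float:
    """Share of users who used the system more than once."""
    if not sessions_per_user:
        return 0.0
    repeat_users = sum(1 for n in sessions_per_user.values() if n > 1)
    return repeat_users / len(sessions_per_user)


if __name__ == "__main__":
    # Illustrative numbers only.
    before = [18, 22, 20, 35, 19]
    after = [14, 15, 16, 30, 13]
    print(f"handling time reduction: {handling_time_reduction(before, after):.0%}")
    print(f"repeat usage: {repeat_usage_rate({'u1': 4, 'u2': 1, 'u3': 2, 'u4': 6}):.0%}")
```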

Stage 3: move from POC to pilot through governance

After the POC, the next step is usually a pilot rather than full rollout.

The pilot exists to validate:

- whether people will actually use the system

- whether workflows stay smooth in real usage

- whether the system remains stable

- whether exception paths are manageable

At this stage, governance matters as much as technical quality.

Teams should be able to answer:

- Which data can the agent access?

- Which actions are permitted?

- Who reviews outputs?

- Who handles failures?

- How is activity recorded?

When those answers are clear during pilot, the production path becomes much less painful.
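For the last question, a common pattern is an append-only log with one structured record per agent action, capturing who triggered it, what data was touched, and who reviewed it. The sketch below writes JSON Lines to a local file; the field names and the `record_agent_action` helper are assumptions, and a production system would more likely ship these records to a central log store.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditRecord:
    user_id: str                    # who triggered the agent
    agent_id: str                   # which agent (and version) acted
    action: str                     # which action was attempted
    data_sources: list[str]         # which data the agent accessed
    outcome: str                    # e.g. "executed", "drafted", "denied", "failed"
    reviewer: Optional[str] = None  # who reviewed or approved the output, if anyone
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def record_agent_action(path: str, record: AuditRecord) -> None:
    """Append one record as a single JSON line; this helper never rewrites earlier lines."""
    with open(path, "a", encoding="utf-8") as f:
        # ensure_ascii=False keeps Traditional Chinese text readable in the log.
        f.write(json.dumps(asdict(record), ensure_ascii=False) + "\n")


def read_records(path: str) -> list[dict]:
    """Load all records, e.g. for an access review or incident investigation."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


if __name__ == "__main__":
    log_path = os.path.join(tempfile.mkdtemp(), "agent_audit.jsonl")
    record_agent_action(log_path, AuditRecord(
        user_id="u-042",
        agent_id="support-assistant@v3",
        action="draft_support_reply",
        data_sources=["kb:returns-policy"],
        outcome="drafted",
    ))
    print(read_records(log_path))
```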

Stage 4: prepare production with integration, operations, and adoption

1. System integration

Production AI agents usually need to connect to:

- APIs

- document systems

- CRM or ERP

- support platforms

- reporting processes

- identity and access systems

That is one reason enterprise teams often move toward MCP (Model Context Protocol), workflow orchestration, or shared internal tool layers instead of isolated chatbots.

2. Operational readiness

After launch, teams need:

- monitoring

- alerts

- access review processes

- prompt and tool versioning

- user feedback loops

AI systems behave more like products that need tuning than features that are simply “done.”
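Monitoring and alerting can start small. The sketch below assumes each agent run is reported as a success or failure, keeps the most recent outcomes in a fixed-size window, and signals an alert when the failure rate crosses a threshold. The `FailureRateMonitor` name and its default window, threshold, and minimum-sample values are placeholders, not recommendations.

```python
from collections import deque


class FailureRateMonitor:
    """Track the most recent agent runs and flag a high failure rate."""

    def __init__(self, window: int = 50, threshold: float = 0.2, min_samples: int = 10):
        self.outcomes: deque[bool] = deque(maxlen=window)  # True means the run failed
        self.threshold = threshold
        self.min_samples = min_samples

    def failure_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def record(self, failed: bool) -> bool:
        """Record one run; return True if an alert should fire."""
        self.outcomes.append(failed)  # oldest outcome drops out once the window is full
        if len(self.outcomes) < self.min_samples:
            return False  # too few runs to judge yet
        return self.failure_rate() > self.threshold


if __name__ == "__main__":
    monitor = FailureRateMonitor(window=20, threshold=0.25, min_samples=5)
    for run_failed in [False, False, True, True, False, True]:
        if monitor.record(run_failed):
            # Hypothetical hook: notify the owning team through your alerting channel.
            print(f"ALERT: failure rate {monitor.failure_rate():.0%}")
```

The minimum-sample rule keeps a single early failure from triggering an alert before there is enough data to judge.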

3. User adoption

Some technically successful projects still fail because usage remains low.

That often happens when users:

- do not know when to use the system

- do not trust the output

- feel it adds more steps than it removes

Rollout should therefore include:

- scenario-based training

- lightweight SOPs

- internal success examples

- team champions

What stands out in Taiwan

There are a few patterns that show up repeatedly in Taiwan enterprise adoption.

1. Decisions are often cross-functional

AI initiatives frequently involve:

- IT

- security

- internal control

- business teams

- management

So a proposal cannot focus on model quality alone. It also needs to explain business value, integration design, risk, and rollout scope.

2. Teams are interested in AI, but cautious about failure in production

That means messaging, planning, and delivery all need to reinforce:

- security

- compliance

- readiness for launch

- real examples

- clear operating process

3. The real opportunity is workflow integration, not isolated model usage

In many companies, employees already use ChatGPT or Claude on their own.

What creates enterprise budget is usually not more standalone prompting. It is embedding AI into:

- department workflows

- internal knowledge and systems

- reporting pipelines

- approval and support processes

That is the shift from personal productivity to enterprise capability.

A realistic 3-6 month cadence

If you are planning an enterprise AI Agent initiative, a practical cadence can look like this:

Month 1: discovery and feasibility

- identify high-frequency workflows

- choose the first use case

- map systems and data dependencies

- define KPIs and risk boundaries

Month 2: proof of concept

- build the smallest viable flow

- validate model behavior and tool usage

- gather stakeholder feedback

Months 3-4: pilot

- connect to real data and limited live workflows

- add permissions, monitoring, and auditability

- create operational SOPs

Months 5-6: rollout

- expand integrations

- increase user coverage

- track ROI and adoption

This staged approach usually earns more trust than trying to launch too much at once.

Conclusion

The hardest part of enterprise AI adoption in Taiwan is not deciding whether AI matters.

It is finding a path from POC to production that is measurable, low-risk, and believable to the organization.

If the project is treated only as a model experiment, it often stalls.

If it is treated as an enterprise workflow integration effort, with use-case selection, governance, systems, and adoption planned together, the odds of a real rollout are much better.

The first project does not need to be the biggest. It needs to be clear, measurable, and easy for the organization to trust.

Method and framing

This article is written from the perspective of enterprise rollout planning in Taiwan. The emphasis is on how teams move from proof of concept to controlled production usage, not just how well a model performs in isolation.

FAQ

What makes a good first AI Agent use case?

The best first use cases are frequent, structured, and measurable. Internal search, draft generation, and support assistance are often better starting points than highly autonomous multi-agent systems.

Why is a strong POC still not enough for production?

Because production introduces permissions, auditability, monitoring, exception handling, and user adoption concerns that a demo often does not cover.

What slows down AI rollout most in Taiwan enterprises?

It is often not the model itself. Cross-functional alignment, security review, process ownership, and trust in the output are usually the bigger blockers.

Planning your enterprise AI rollout?

BotsUP helps teams identify the right first use cases, then design a path from POC to MCP integration, permissions, and production rollout.

Related reading:

- Why Your AI Agent Needs MCP, Not Just APIs

- Enterprise MCP in Production: An OAuth 2.1 Security Checklist

If you want to map the right starting point for your company, contact us.